The graveyard of failed startups is filled with products that took too long to build, cost too much to develop, and solved problems nobody had. The pattern is consistent enough to be a cliché: a founder has an idea, spends six to eighteen months building a polished product in private, launches with fanfare, and discovers that the assumptions underlying the product were wrong in ways that a few days of customer conversations before building could have revealed. The average time from founding to seed round increased from 8 months in 2021 to 19 months in 2025, partly reflecting investor caution but primarily reflecting founders taking longer to reach the validated traction that seed investors now require. Seventy-eight percent of B2B seed deals in 2025 required at least $10,000 in monthly recurring revenue (MRR) or 1,000 engaged users before investment. Pre-product raises are nearly extinct outside repeat founders with track records. Traction is the currency, and an MVP is how you generate traction before you have built the full product.

In 2026, two things are simultaneously true about building an MVP. The cost of building has dropped dramatically: AI-assisted engineering, no-code platforms, and a mature ecosystem of API integrations mean that founders can build functional products faster and cheaper than at any previous moment in the history of software. Startups using AI during their MVP phase are 40 percent more likely to find product-market fit and iterate 60 percent faster than those doing everything manually. At the same time, the cost of attention has skyrocketed: more products than ever compete for users’ time, trust, and adoption decisions, and the bar for a product compelling enough to generate word-of-mouth and organic growth has risen accordingly. Building fast is now table stakes. Building the right thing, meaning the minimum set of features that genuinely solve a real problem for a specific customer, requires the kind of upfront customer discovery and hypothesis discipline that most first-time founders skip in their rush to launch.

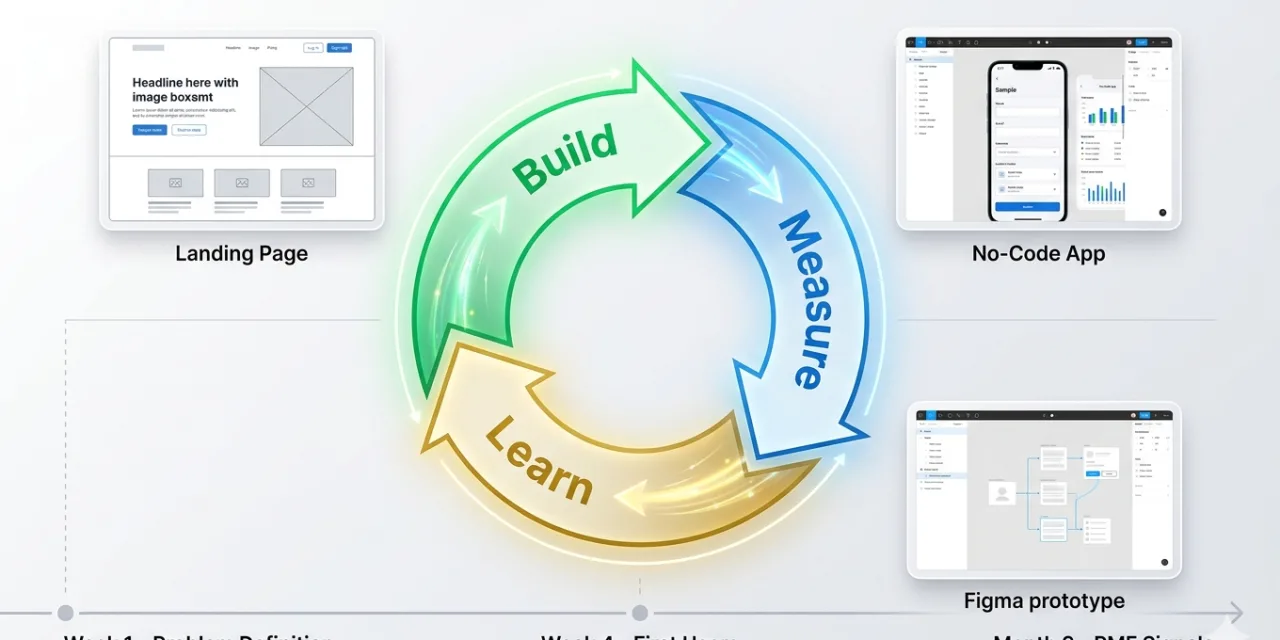

This guide covers the complete lean startup methodology for building an MVP in 2026: what an MVP actually is (and what it is not), the build-measure-learn loop that defines the lean approach, the six steps from idea to first users, the no-code versus custom build decision, how to measure whether the MVP is working, the pivot-or-persevere decision, and the most common MVP mistakes to avoid. If you are preparing to build your first product, this is the framework that maximises the probability that what you build is something people actually want.

What an MVP Actually Is — and What It Is Not

The term Minimum Viable Product, coined by Frank Robinson in 2001 and popularised by Eric Ries in his 2011 book The Lean Startup, is among the most frequently misunderstood concepts in startup practice. Two persistent misunderstandings undermine most founders’ MVP approach before they begin.

The first misunderstanding is treating the MVP as a stripped-down version of the full product: a normal product development process, but with fewer features. This misses what makes an MVP a learning tool. An MVP is not defined primarily by its feature count. It is defined by the question it is designed to answer. Every MVP decision (which features to include, what the user experience looks like, which customer segment to target) should be made in service of answering the specific core hypothesis that determines whether the business can work. Dropbox’s MVP was not a stripped-down version of Dropbox. It was a roughly three-minute demo video that showed the product concept and measured whether people wanted what Dropbox was describing badly enough to sign up for early access, before the product was ready for public release. Airbnb’s MVP was not a stripped-down booking platform. It was a simple website where the founders personally photographed apartments and manually managed bookings to test whether strangers would pay to stay in other strangers’ homes. The MVP is a learning tool, not a product milestone.

The second misunderstanding is treating “minimum” as meaning “mediocre.” A minimum viable product is not a buggy, incomplete, or low-quality product. It is a product that does one thing — the most important thing — well. Reid Hoffman’s famous line captures the right instinct: “If you are not embarrassed by the first version of your product, you have launched too late.” The embarrassment is about breadth, not quality. The first version of Slack did not have most of what Slack has today. But it worked exceptionally well for what it did — it was genuinely useful for small teams communicating around shared projects — and that genuine usefulness is what generated the word-of-mouth that drove early growth. A minimum viable product that does one thing poorly is not an MVP. It is a bug.

The clearest definition: an MVP is the smallest version of your product that delivers genuine value to a specific type of customer and generates the learning data needed to validate or invalidate the core business hypothesis. Smaller than that is a prototype (which tests usability but not value delivery). Larger than that is a product (which has invested in features whose value has not yet been validated). The MVP occupies precisely the space between them.

The Build-Measure-Learn Loop: How Lean Startups Work

The Lean Startup’s core operational model is the build-measure-learn feedback loop — a cycle of creating, testing, and iterating that moves the startup from hypothesis to validated learning as quickly as possible. Every pass through the loop answers questions and generates new ones; the goal is to maximise the number of learning cycles per unit of time and capital rather than to maximise the sophistication of what is built in any single cycle.

The loop begins not with Building but with Learning: identifying the most important question the next build cycle needs to answer. What is the core assumption that most endangers the business model if it is wrong? Is it that potential customers have the problem you think they have? That they experience it frequently enough to seek a solution? That they would pay for your solution? That your solution actually delivers the outcome it claims to? The assumption most likely to destroy the business if wrong is the one that should be tested first. Validated learning — the process of empirically proving or disproving a specific hypothesis about customers — is the only form of progress that the lean startup framework treats as genuinely valuable. Features shipped are not progress. Validated learning is progress.

Build means creating the minimum experiment needed to test the assumption identified in the learning step. Sometimes this is a product feature. Sometimes it is a landing page. Sometimes it is a concierge process (manual delivery of the outcome the product would eventually automate). The question to ask before building anything is: “What is the fastest, cheapest way to find out whether this assumption is right?” The answer is often not a software feature. Zappos tested the assumption that people would buy shoes online without trying them on by manually purchasing shoes from physical stores and shipping them to online customers — before building any inventory management or logistics infrastructure. The concierge approach generated real behaviour data (did customers buy and keep the shoes?) at near-zero infrastructure cost.

Measure means collecting the data that tests the hypothesis — specifically, the actionable metrics that reveal whether the assumption was correct, not the vanity metrics that look impressive but provide no decision-making guidance. Signing up for an email list validates interest; completing the core workflow in the product validates value delivery; paying for the product validates willingness to pay. Each of these is a different and more demanding test than the previous one, and each generates more reliable evidence about whether the business can work.

Learn means extracting the genuine conclusion from the measurement data and using it to determine the next step. Did the experiment validate the hypothesis? If yes, proceed to the next most important assumption with the same rigour. If no, was it because the hypothesis was wrong (requiring a pivot to a different assumption or approach) or because the execution was wrong (requiring a new test of the same hypothesis with better execution)?

Step One: Define the Problem Before Touching a Product

The most valuable investment a founder can make before building anything is confirming that the problem they intend to solve is real — that it exists in the world as an actual painful experience for real people, experienced frequently enough to motivate them to seek and pay for a solution. The graveyard of failed startups is filled with products that solved problems that existed only in the founder’s head: problems that were real but rare, real but mild, or real but already adequately solved by existing alternatives.

The customer discovery process that validates a problem before building involves 20 to 30 structured interviews with people who match the target customer profile. The interviews are designed to understand the customer’s current behaviour, not to pitch the product idea — asking about how they currently address the problem, how often they face it, what they have already tried, and how much the problem costs them in time, money, or frustration. The critical discipline is not asking whether they would use your product (people systematically overstate hypothetical purchase intent) but observing whether their current behaviour reveals a problem worth solving: are they using multiple incomplete tools as workarounds? Are they hiring someone to do manually what your product would automate? Are they paying for an expensive alternative that your product would undercut on price or outperform on quality?

The painkiller versus vitamin distinction, extended here with a third category, is the most useful frame for evaluating problem severity. Vitamins are nice to have: they improve a situation that is basically fine without them. Painkillers solve acute problems that the customer is actively motivated to address. Antibiotics, the most valuable category, solve urgent, systemic problems that the customer cannot afford to leave unaddressed. An MVP for a painkiller or antibiotic starts with motivated customers who are predisposed to adopt. An MVP for a vitamin requires creating demand rather than capturing existing demand, which is a fundamentally harder and more capital-intensive growth challenge. Customer discovery should reveal which category the problem falls into before any building begins.

Step Two: Define the Core Hypothesis and Success Criteria

Before building, define the specific hypothesis that the MVP will test and the specific measurement that will confirm or deny it. A vague hypothesis produces vague learning. “We think people want our product” is not a testable hypothesis. “We believe that at least 30 percent of users who complete the core onboarding workflow will return and use the product again within seven days” is a testable hypothesis with a specific success criterion and a clear measurement methodology.
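One way to keep a hypothesis honest is to write it down as data before the experiment runs, so the success criterion cannot drift afterwards. The sketch below is only an illustration of that idea: the `Hypothesis` class, its field names, and the cohort numbers are all hypothetical, and the 30 percent threshold is taken from the example hypothesis above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """A falsifiable statement with an explicit pass/fail threshold."""
    statement: str
    threshold: float  # minimum share of the cohort that must show the behaviour

    def evaluate(self, observed: int, cohort_size: int) -> bool:
        """Return True when the observed rate meets or exceeds the threshold."""
        if cohort_size <= 0:
            raise ValueError("cohort_size must be positive")
        return observed / cohort_size >= self.threshold


# Hypothetical cohort: 200 users completed onboarding, 68 came back within 7 days.
day7_return = Hypothesis(
    statement="At least 30% of onboarded users return within seven days",
    threshold=0.30,
)
validated = day7_return.evaluate(observed=68, cohort_size=200)
print(day7_return.statement, "->", "validated" if validated else "not validated")
```

Freezing the dataclass is a deliberate choice here: once the threshold is recorded, it cannot be quietly lowered after the results come in.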

The hypothesis should identify the riskiest assumption — the one that most threatens the business model if wrong. In the early stages, this is usually about demand: do enough people have this problem badly enough to seek a solution? Later, it shifts to engagement: do users who try the product find it valuable enough to keep using it? And later still, to monetisation: will users pay the price required for the business model to work? Testing these assumptions in order — demand before engagement, engagement before monetisation — prevents the common failure mode of building an elaborate monetisation model around a product that users find interesting but not valuable enough to keep using.

Step Three: Scope the MVP to the Core Feature Set

A successful MVP has three to five core features that solve one specific problem exceptionally well. If the feature list has more than seven items, it is a full product, not an MVP. The discipline of the scoping process is eliminating everything that is not essential to testing the core hypothesis — not deferring it, but actively removing it from scope and confirming that its absence does not prevent the MVP from delivering the core value proposition.

The MoSCoW framework — Must Have, Should Have, Could Have, Won’t Have — is the standard tool for MVP scoping. Must Have features are those without which the product cannot deliver the core value proposition: the MVP is not viable without them. Should Have features are important improvements that meaningfully enhance the experience but whose absence does not prevent value delivery. Could Have features are nice additions that can wait. Won’t Have features are explicitly out of scope for this build cycle. The discipline is in the ruthlessness of the Must Have definition — challenging every proposed Must Have with the question “can we deliver the core value without this?” and removing anything for which the answer is yes.
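The scoping rules described in this step can be sketched in a few lines. This is a minimal illustration, not a standard tool: the `Priority` enum, the function names, and the invoicing backlog are hypothetical, and the size limits simply restate the three-to-five and more-than-seven rules of thumb from this step.

```python
from enum import Enum
from typing import Dict, List, Optional


class Priority(Enum):
    MUST = "Must Have"
    SHOULD = "Should Have"
    COULD = "Could Have"
    WONT = "Won't Have"


def mvp_scope(backlog: Dict[str, Priority]) -> List[str]:
    """Keep only Must Have features; everything else is out of this build cycle."""
    return [name for name, priority in backlog.items() if priority is Priority.MUST]


def scope_warning(scope: List[str]) -> Optional[str]:
    """Flag scopes that exceed the rules of thumb (three to five features, seven at most)."""
    if len(scope) > 7:
        return "more than seven features: this is a full product, not an MVP"
    if len(scope) > 5:
        return "above the three-to-five range: challenge each Must Have again"
    return None


# Hypothetical backlog for an invoicing tool.
backlog = {
    "create invoice": Priority.MUST,
    "send invoice by email": Priority.MUST,
    "record payment": Priority.MUST,
    "custom branding": Priority.SHOULD,
    "multi-currency": Priority.COULD,
    "accounting-suite sync": Priority.WONT,
}
scope = mvp_scope(backlog)
print(scope, scope_warning(scope))
```

The warning function does not decide anything on its own. It only prompts another pass of the "can we deliver the core value without this?" question.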

The user journey mapping that supports MVP scoping identifies the two to three critical paths users will take through the product: the onboarding flow from sign-up to first value delivery, the core action flow of the primary task users perform repeatedly, and the feedback loop through which users report issues or request features. For each flow, every step that is not essential to completing the flow is a candidate for removal from the MVP scope. Friction in these core flows is the primary reason MVPs fail to validate their hypotheses — not because the hypothesis was wrong but because users could not reach the experience that would have validated it.

Step Four: No-Code vs Custom Build — The 2026 Decision Framework

The no-code versus custom development decision is one of the most practically important choices in MVP development, and the 2026 landscape has shifted it significantly in favour of no-code for a broader set of use cases than was viable two to three years ago. No-code and low-code platforms — Bubble and Webflow for web applications, Glide and Adalo for mobile, Softr for data-driven tools, Zapier and Make for automation — can now build functional MVPs that would have required significant engineering resources in 2020, in a fraction of the time and cost.

No-code MVPs cost $5,000 to $15,000 in development resources (or near zero for founders who learn the tools themselves), compared to $50,000 to $100,000 for custom-built MVPs with experienced developers, and $150,000-plus for agency-built products. The time-to-launch advantage is equally significant: a no-code MVP can be functional in two to four weeks; a custom-built equivalent typically takes two to four months minimum.

The decision framework for choosing between them is specific: use no-code tools if your core value does not require complex custom logic or algorithms, if you are non-technical and bootstrapping with limited runway, or if you need to validate demand in under four weeks. Use custom development if your product requires unique algorithms, proprietary data processing, or complex integrations that no-code platforms cannot support; if you plan to raise funding and need scalable architecture from the start; or if your core competitive advantage is in the technical implementation itself — if “how it works” is the product differentiation, not “what it does.” Eighty percent of startups report using MVPs to validate ideas — and a growing proportion of those are using no-code for the initial validation before investing in custom engineering.
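The criteria above can be written as a small decision function. This is a sketch under stated assumptions rather than a definitive rule: the `BuildContext` fields are hypothetical names for the conditions listed above, and the tie-break (validate with no-code first when both sets of signals apply) is this sketch's interpretation of the observation that many startups validate with no-code before investing in custom engineering.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BuildContext:
    # Signals that favour custom development.
    needs_unique_algorithms: bool = False
    needs_unsupported_integrations: bool = False
    needs_scalable_architecture_now: bool = False  # e.g. fundraising is planned
    tech_is_the_differentiator: bool = False       # "how it works" is the product
    # Signals that favour no-code.
    non_technical_and_bootstrapping: bool = False
    must_validate_within_four_weeks: bool = False


def recommend_build(ctx: BuildContext) -> str:
    """Return a build recommendation for the MVP.

    Assumption of this sketch: when custom and no-code signals both apply,
    demand is validated with no-code first and custom work follows.
    """
    needs_custom = any((
        ctx.needs_unique_algorithms,
        ctx.needs_unsupported_integrations,
        ctx.needs_scalable_architecture_now,
        ctx.tech_is_the_differentiator,
    ))
    favours_no_code = (
        ctx.non_technical_and_bootstrapping or ctx.must_validate_within_four_weeks
    )
    if needs_custom and favours_no_code:
        return "no-code validation first, custom build after"
    if needs_custom:
        return "custom"
    return "no-code"


print(recommend_build(BuildContext(non_technical_and_bootstrapping=True)))
print(recommend_build(BuildContext(tech_is_the_differentiator=True)))
```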

AI-assisted development has further compressed the custom build timeline. Claude Code, GitHub Copilot, and similar coding agents can accelerate experienced developers’ output significantly, and can enable technically-comfortable founders who are not professional engineers to build functional MVPs without a dedicated engineering hire. The 2026 reality is that the technical barrier to building an MVP has never been lower — which means the competitive advantage increasingly lies not in the ability to build but in the clarity of the problem definition and the quality of the customer understanding that informs what is built.

Step Five: Launch to a Small, Specific Audience

The launch of an MVP is not a product launch in the traditional marketing sense. It is a controlled experiment with a specific audience, designed to generate the maximum amount of learning about the core hypothesis with the minimum number of users. The ideal early user group is not “everyone who might be interested” — it is a specific cohort of early adopters who experience the problem acutely, are predisposed to try new solutions, and will provide specific, actionable feedback rather than vague impressions.

The concierge onboarding approach — where a founder or team member personally walks each of the first 50 to 100 users through the product — generates feedback that automated tools consistently miss. The micro-frictions that cause users to drop out of the onboarding flow, the moments of confusion that the product design did not anticipate, the features that users immediately look for and cannot find — all of these are visible to a founder sitting alongside a user in an onboarding session in ways that no analytics dashboard can reveal. Concierge onboarding is slow and non-scalable by design. It is also the fastest way to understand what needs to change before investing in automated systems that scale a poor experience to a larger audience.

Companies that iterate based on user feedback within 30 days of launch are three times more likely to achieve product-market fit than those that wait longer. The 30-day iteration clock starts at first launch, not at some later point when the product feels more polished. Launching earlier with a narrower feature set and higher iteration velocity consistently produces better outcomes than launching later with a more complete product and slower iteration — because the most valuable information in the MVP phase comes from real users using the real product in the real conditions they actually face, not from founders anticipating what those conditions will be.

Step Six: Measure with Actionable Metrics

Metrics in the MVP phase serve one purpose: generating the evidence needed to make the pivot-or-persevere decision. Vanity metrics — total sign-ups, page views, social media followers — create the appearance of progress without informing decisions. Actionable metrics — activation rate, day-7 retention, weekly active users as a percentage of total registered users, net promoter score from specific cohorts — reveal the specific behaviour patterns that indicate whether the MVP is delivering genuine value.

The metric hierarchy for an early-stage MVP follows the first three stages of the AARRR framework (Acquisition, Activation, Retention, Referral, Revenue). Acquisition (how many users are signing up, and through which channels) tells you whether there is interest. Activation (what percentage of new users complete the core onboarding workflow and reach the aha moment) tells you whether the product delivers value clearly enough for new users to find it. Retention (what percentage of activated users return within seven and thirty days) tells you whether the value delivered is compelling enough to create a habit. Referral and Revenue become relevant later, once there is a retained user base. In the MVP phase, activation and retention are the metrics that matter most, because they are the clearest signals of whether the core value proposition is working. High sign-up rates with low activation and retention indicate that the marketing is creating interest the product cannot fulfil. High activation and retention with low sign-up rates indicate a product that works well for the people who reach it, with an acquisition problem rather than a product problem.
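The funnel reading described above can be made concrete with a little arithmetic. The sketch below is illustrative only: the `Funnel` class, the `diagnose` function, and the sample counts are hypothetical, `signup_target` is a number each team would have to set for itself, and the default activation and retention floors (30 and 20 percent) are borrowed from the pivot thresholds this guide gives in its pivot-or-persevere section.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Funnel:
    signups: int
    activated: int      # completed core onboarding and reached the aha moment
    retained_day7: int  # activated users who came back within seven days

    @property
    def activation_rate(self) -> float:
        return self.activated / self.signups if self.signups else 0.0

    @property
    def retention_rate(self) -> float:
        return self.retained_day7 / self.activated if self.activated else 0.0


def diagnose(funnel: Funnel, signup_target: int,
             min_activation: float = 0.30, min_retention: float = 0.20) -> str:
    """Separate a product problem from an acquisition problem."""
    product_works = (funnel.activation_rate >= min_activation
                     and funnel.retention_rate >= min_retention)
    enough_signups = funnel.signups >= signup_target
    if enough_signups and not product_works:
        return "product problem: marketing creates interest the product cannot fulfil"
    if product_works and not enough_signups:
        return "acquisition problem: the product works for the people who reach it"
    if product_works:
        return "healthy funnel"
    return "weak on both: revisit the hypothesis"


# Hypothetical cohorts measured against a hypothetical target of 500 sign-ups.
print(diagnose(Funnel(signups=1000, activated=150, retained_day7=20), signup_target=500))
print(diagnose(Funnel(signups=80, activated=48, retained_day7=24), signup_target=500))
```

Retention is computed against activated users rather than all sign-ups, matching the definition above, so a weak onboarding flow does not mask how well the product holds the users who do reach its core value.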

Qualitative feedback — from user interviews, from in-product surveys at key moments, and from analysis of support conversations — complements the quantitative metrics with the explanatory layer that numbers alone cannot provide. Knowing that 40 percent of users do not complete the onboarding flow tells you there is a problem. Watching three users attempt the onboarding flow in a moderated session tells you which specific step is causing them to drop off and why. Both are required for confident decision-making about what to fix.

The Pivot-or-Persevere Decision

The pivot-or-persevere decision — the moment when the MVP’s measurement data is used to decide whether to continue on the current path or change fundamental direction — is the most consequential decision in the lean startup cycle and the one that most founders make too slowly. The instinct to give the current approach more time before concluding it is not working is understandable; the emotional investment in a specific product vision is real; and the discomfort of admitting that months of work needs to be redirected is significant. But the lean startup framework treats time spent continuing on a path that the data indicates is not working as the most expensive possible waste — more expensive than the cost of pivoting.

A pivot is not a failure. It is a course correction informed by validated learning: the application of what was learned from the current MVP to a new hypothesis about product, market, customer segment, or business model. Instagram pivoted from a location-based check-in app called Burbn to a photo-sharing app when the data showed that photo sharing was the only feature users were genuinely engaged with. Slack pivoted from an internal tool at a gaming company into a standalone communication platform when the founders recognised that the problem the tool solved, keeping distributed teams connected and informed in real time, was more valuable and more broadly applicable than the game they had originally been building. Twitter emerged from a pivot within a podcasting company called Odeo after Apple added podcast support to iTunes and threatened to eliminate Odeo’s market. These are not stories of failure. They are stories of the lean startup’s pivot-or-persevere decision functioning exactly as designed.

The pivot-or-persevere decision should be made on the basis of the validated learning data from the MVP cycle, not on the basis of how long the current approach has been pursued or how much has been invested in it. The data that indicates a pivot is needed: activation rates persistently below 30 percent despite targeted onboarding improvements; day-7 retention below 20 percent after multiple iteration cycles; no users describing the product as essential to them; customer interview feedback consistently identifying a different problem or a different solution approach as more valuable than what is currently built. Any of these signals, sustained across multiple measurement cycles, indicates that the current path is not converging toward product-market fit — and that the most valuable thing the team can do is redirect effort toward a new hypothesis.
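The signals listed above can be checked mechanically once each measurement cycle is recorded. The following is a rough sketch, not a prescribed procedure: `CycleMetrics` and both functions are hypothetical, and reading "sustained across multiple measurement cycles" as "the same signal in each of the last three cycles" is an assumption of this example.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class CycleMetrics:
    activation_rate: float               # 0.0 to 1.0
    day7_retention: float                # 0.0 to 1.0
    users_calling_it_essential: int
    interviews_point_elsewhere: bool     # feedback favours another problem or solution


def pivot_signals(cycle: CycleMetrics) -> List[str]:
    """List which of the pivot signals are present in one cycle."""
    signals = []
    if cycle.activation_rate < 0.30:
        signals.append("activation below 30%")
    if cycle.day7_retention < 0.20:
        signals.append("day-7 retention below 20%")
    if cycle.users_calling_it_essential == 0:
        signals.append("no user calls the product essential")
    if cycle.interviews_point_elsewhere:
        signals.append("interviews point to a different problem or solution")
    return signals


def should_pivot(history: List[CycleMetrics], min_cycles: int = 3) -> bool:
    """True when the same signal appears in each of the last `min_cycles` cycles.

    `min_cycles=3` is an assumption; the text only says "multiple" cycles.
    """
    if min_cycles < 1 or len(history) < min_cycles:
        return False
    recent = [set(pivot_signals(cycle)) for cycle in history[-min_cycles:]]
    return bool(set.intersection(*recent))


# Hypothetical history: retention stays under 20% for three cycles in a row.
history = [
    CycleMetrics(0.35, 0.12, 2, False),
    CycleMetrics(0.38, 0.15, 3, False),
    CycleMetrics(0.41, 0.14, 3, False),
]
print(should_pivot(history))
```

Requiring the same signal to persist, rather than any signal in any cycle, keeps a single noisy cohort from triggering a change of direction.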

The 2026 MVP: Faster, Cheaper, Harder to Ignore

The 2026 MVP landscape offers unprecedented speed and reduced cost for founders who use the available tools effectively. AI-assisted development, mature no-code platforms, and a rich ecosystem of API integrations — Stripe for payments, Firebase for backend infrastructure, Twilio for communication, Auth0 for authentication — mean that the raw engineering work of building a functional MVP has been commoditised. The bottleneck has shifted from “can we build this?” to “do we understand the problem well enough to know what to build?”

That shift places customer discovery, problem definition, and hypothesis clarity at the centre of MVP success in a way that was not as structurally necessary when building was harder. When it took six months and $150,000 to build an MVP, the investment in getting the problem definition right before building was obviously worthwhile. When it takes four weeks and $10,000, the temptation to start building immediately and adjust based on what the product teaches you is stronger — and is sometimes right. But the most efficient path from idea to product-market fit in 2026 combines the speed advantage of modern tools with the discipline of the lean startup method: clear problem definition, specific hypothesis, ruthless MVP scope, early launch to a specific audience, rigorous measurement, and the willingness to pivot when the data indicates the current path is not converging toward a product people genuinely need. The tools have changed; the method has not. And the method works.
